Han Qi's Assignment 2

Code

from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys

browser = webdriver.Chrome()
browser.implicitly_wait(10)  # wait up to 10 s for an element to appear before failing
browser.get('https://www.szse.cn/disclosure/listed/fixed/index.html')

# Search for the company by its short name
element = browser.find_element(By.ID, 'input_code')  # Find the search box
element.send_keys('科陆' + Keys.RETURN)

# Filter by industry: 制造业 (manufacturing)
browser.find_element(By.CSS_SELECTOR, "#select_hangye .glyphicon").click()
browser.find_element(By.LINK_TEXT, "制造业").click()

# Filter by announcement type: 年度报告 (annual report)
browser.find_element(By.CSS_SELECTOR, "#select_gonggao .c-selectex-btn-text").click()
browser.find_element(By.LINK_TEXT, "年度报告").click()

# Go to page 2 of the results
browser.find_element(By.LINK_TEXT, "2").click()
#browser.execute_script("window.scrollTo(0,0)")

# Save the inner HTML of the results table to a file
element1 = browser.find_element(By.ID, 'disclosure-table')
innerHTML = element1.get_attribute('innerHTML')
with open('innerHTML.html', 'w', encoding='utf-8') as f:
    f.write(innerHTML)

browser.quit()

from bs4 import BeautifulSoup
import re
import pandas as pd

def to_pretty(fhtml):
    # Read the saved HTML, prettify it so each tag sits on its own line,
    # and write the result next to the input as '<name>-prettified.html'
    with open(fhtml, encoding='utf-8') as f:
        html = f.read()
    soup = BeautifulSoup(html, 'html.parser')  # name the parser explicitly
    html_prettified = soup.prettify()
    with open(fhtml[0:-5] + '-prettified.html', 'w', encoding='utf-8') as f:
        f.write(html_prettified)
    return html_prettified

html = to_pretty('innerHTML.html')

def txt_to_df(html):
    # html table text to DataFrame
    # Each table row is one <tr>...</tr> pair
    p = re.compile('<tr>(.*?)</tr>', re.DOTALL)
    trs = p.findall(html)
    # Each cell is one <td ...>...</td> pair; trs[0] is the header row, so skip it
    p2 = re.compile('<td.*?>(.*?)</td>', re.DOTALL)
    tds = [p2.findall(tr) for tr in trs[1:]]
    df = pd.DataFrame({'证券代码': [td[0] for td in tds],
                       '简称': [td[1] for td in tds],
                       '公告标题': [td[2] for td in tds],
                       '公告时间': [td[3] for td in tds]})
    return df

df_txt = txt_to_df(html)

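# Text inside an <a ...>...</a> pair and inside a <span ...>...</span> pair
p_a = re.compile('<a.*?>(.*?)</a>', re.DOTALL)
p_span = re.compile('<span.*?>(.*?)</span>', re.DOTALL)

get_code = lambda txt: p_a.search(txt).group(1).strip()
get_time = lambda txt: p_span.search(txt).group(1).strip()

def get_link(txt):
    # Capture the attachpath and href attributes of the <a> tag,
    # then the title text inside the nested <span>
    p_txt = '<a.*?attachpath="(.*?)".*?href="(.*?)".*?<span.*?>(.*?)</span>'
    p = re.compile(p_txt, re.DOTALL)
    matchObj = p.search(txt)
    attachpath = matchObj.group(1).strip()
    href = matchObj.group(2).strip()
    title = matchObj.group(3).strip()
    return [attachpath, href, title]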

def get_data(df_txt):
    prefix = 'https://disc.szse.cn/download'
    prefix_href = 'https://www.szse.cn/'
    df = df_txt
    codes = [get_code(td) for td in df['证券代码']]
    short_names = [get_code(td) for td in df['简称']]
    ahts = [get_link(td) for td in df['公告标题']]  # [attachpath, href, title] per row
    times = [get_time(td) for td in df['公告时间']]
    df = pd.DataFrame({'证券代码': codes,
                       '简称': short_names,
                       '公告标题': [aht[2] for aht in ahts],
                       'attachpath': [prefix + aht[0] for aht in ahts],
                       'href': [prefix_href + aht[1] for aht in ahts],
                       '公告时间': times
                       })
    return df

df_data = get_data(df_txt)
df_data.to_csv('sample_data_of_002121.csv')
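get_data() builds the full URLs by plain string concatenation, so the result depends on whether each path starts with a slash. The sketch below shows one way to make the join tolerant of either form. The helper name `join_url` and the sample paths are hypothetical and only illustrate the idea; the actual attribute values on the page may look different.

```python
def join_url(prefix, path):
    """Join a URL prefix and a path with exactly one slash between them."""
    return prefix.rstrip('/') + '/' + path.lstrip('/')


# Hypothetical paths, used only to show the behaviour
pdf_url = join_url('https://disc.szse.cn/download', '/disc/sample.pdf')
page_url = join_url('https://www.szse.cn/', '/disclosure/sample.html')
print(pdf_url)
print(page_url)
```

With a helper like this, a prefix with or without a trailing slash produces the same URL, which plain `+` does not guarantee.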

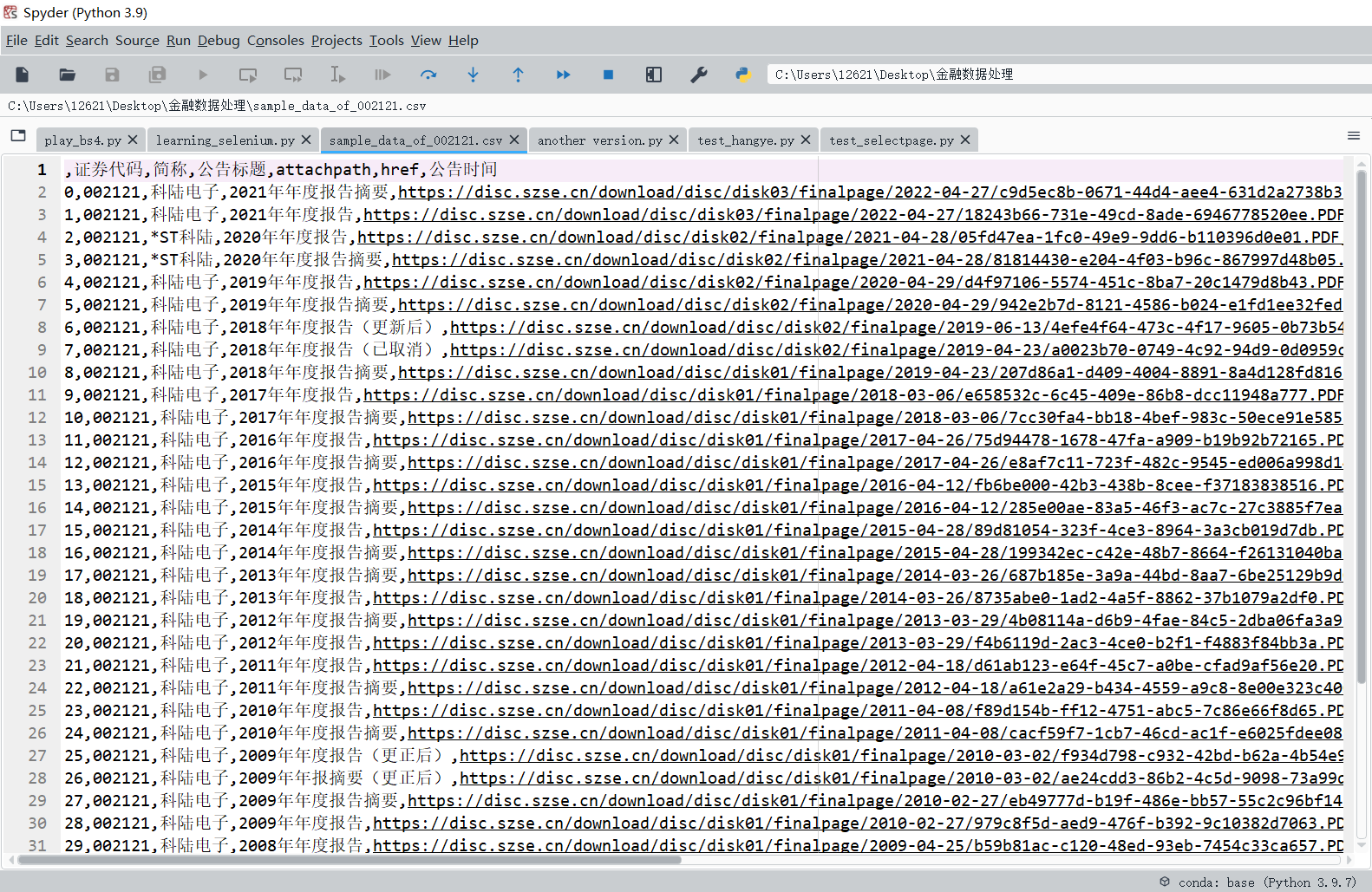

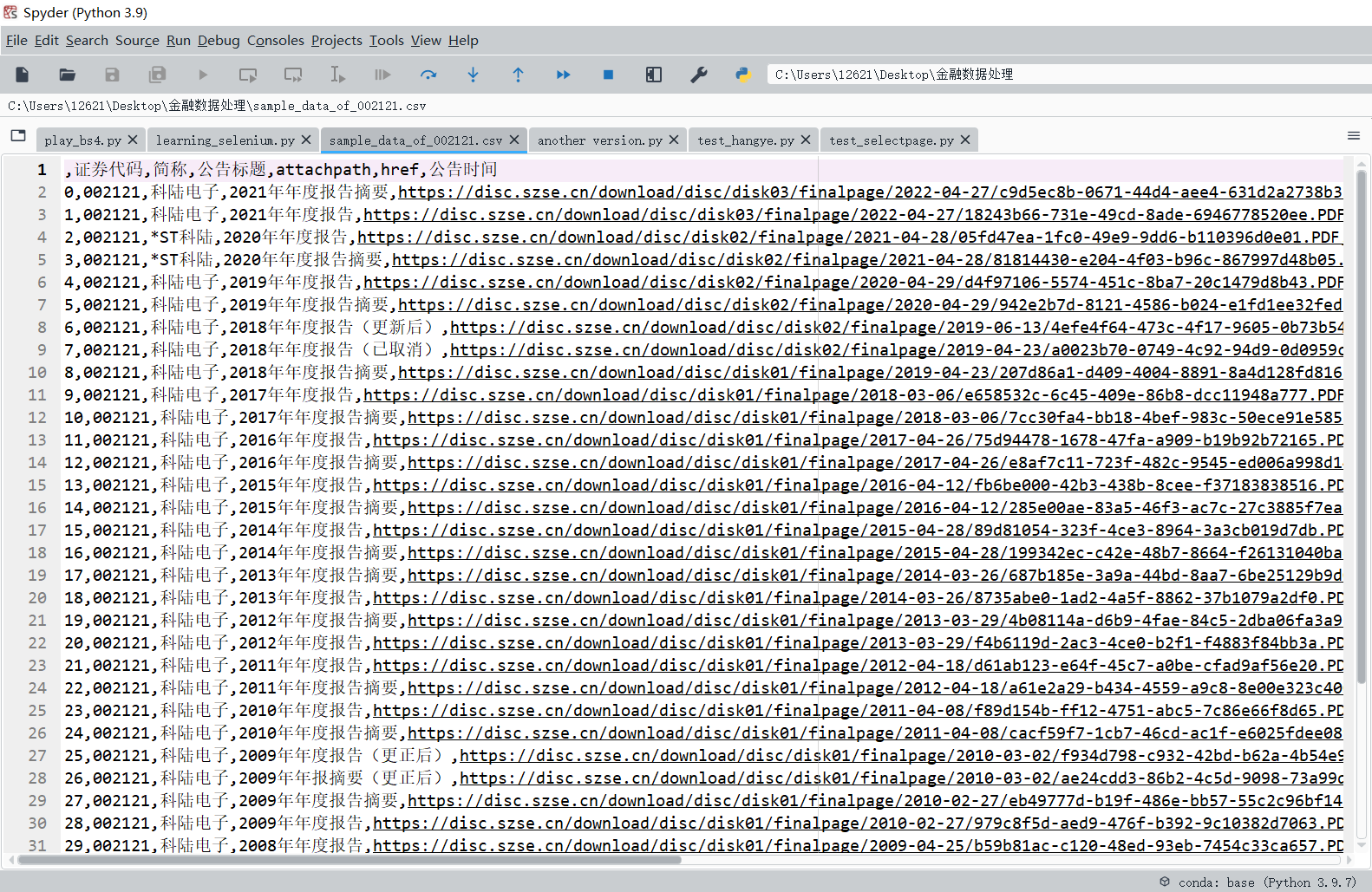

Results

Explanation

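- Use Selenium to simulate user actions: search the page by company name, industry, and announcement type (annual report), then read the HTML of the results table;

- Write the scraped HTML to a file;

- Define to_pretty(), which processes the innerHTML so that each HTML tag sits on its own line;

- Define txt_to_df(), which uses regular expressions to search the matching tag pairs and pull out the contents of the "证券代码" (stock code), "简称" (short name), "公告标题" (announcement title), and "公告时间" (announcement time) columns;

- Define get_link(), which uses a regular expression to search the matching tag pairs and pull out the "attachpath", "href", and "title" values;

- Define get_data(), which pairs each column name with its data;

- Write the processed data to the file 'sample_data_of_002121.csv'.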
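The regular-expression steps above can be tried offline on a small HTML string, without a browser or pandas. This is a minimal sketch: `SAMPLE_HTML` is a made-up row shaped the way the prettified table is assumed to look, and `parse_rows` and the pattern names are hypothetical. The real page markup may differ, in which case the patterns need adjusting.

```python
import re

# Hypothetical sample: one header row and one data row (not real scraped data)
SAMPLE_HTML = """
<tr>
 <th>证券代码</th>
</tr>
<tr>
 <td class="text-center">
  <a href="#">
   002121
  </a>
 </td>
 <td>
  <a href="#">
   科陆电子
  </a>
 </td>
 <td>
  <a attachpath="/disc/sample.pdf" href="/disclosure/sample.html">
   <span class="title">
    Sample annual report
   </span>
  </a>
 </td>
 <td>
  <span class="time">
   2022-04-30
  </span>
 </td>
</tr>
"""

ROW = re.compile(r'<tr>(.*?)</tr>', re.DOTALL)            # one table row
CELL = re.compile(r'<td.*?>(.*?)</td>', re.DOTALL)        # one cell in a row
ANCHOR = re.compile(r'<a.*?>(.*?)</a>', re.DOTALL)        # text inside a link
SPAN = re.compile(r'<span.*?>(.*?)</span>', re.DOTALL)    # text inside a span
LINK = re.compile(
    r'<a.*?attachpath="(.*?)".*?href="(.*?)".*?<span.*?>(.*?)</span>',
    re.DOTALL)                                            # attachpath, href, title


def parse_rows(html):
    """Return one dict per data row, skipping the header row."""
    records = []
    for row in ROW.findall(html)[1:]:
        cells = CELL.findall(row)
        attachpath, href, title = (g.strip() for g in LINK.search(cells[2]).groups())
        records.append({
            'code': ANCHOR.search(cells[0]).group(1).strip(),
            'name': ANCHOR.search(cells[1]).group(1).strip(),
            'title': title,
            'attachpath': attachpath,
            'href': href,
            'time': SPAN.search(cells[3]).group(1).strip(),
        })
    return records


records = parse_rows(SAMPLE_HTML)
print(records[0])
```

Because every pattern uses `re.DOTALL` and a lazy `.*?`, a match can span the line breaks that prettify() inserts but stops at the first closing tag, which is why each cell is captured separately.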